Lecture 3. POS tagging. Key word and phrase extraction — различия между версиями

Polidson (обсуждение | вклад) (→Using TF-IDF to measure text similarity) |

Katya (обсуждение | вклад) |

||

| Строка 1: | Строка 1: | ||

| + | Ekaterina Chernyak, Dmitry Ilvovsky | ||

| + | |||

== Part of speech (POS) == | == Part of speech (POS) == | ||

Текущая версия на 22:49, 5 ноября 2016

Ekaterina Chernyak, Dmitry Ilvovsky

Содержание

- 1 Part of speech (POS)

- 2 POS ambiguation

- 3 POS taggers

- 4 Exercise 3.1 Genre comparison

- 5 Key word and phrase extraction

- 6 Supervised methods for key word and phrase extraction

- 7 Unsupervised methods for key word and phrase extraction from a single text

- 8 Bigram association measures

- 9 TextRank: using graph centrality measures for key word and phrase extraction [Mihalcea, Tarau, 2004]

- 10 Unsupervised methods for key word and phrase selection from a text in a collection

- 11 Variants of TF and IDF weights

- 12 TF-IDF in NLTK

- 13 TF-IDF alternatives

- 14 Using TF-IDF to measure text similarity

Part of speech (POS)

Part of speech [Manning, Shuetze, 1999]

Words of a language are grouped into classes which show similar syntactic behavior. These word classes are called parts of speech (POS). Three important parts of speech are noun, verb, and adjective. The major types of morphological process are in ection, derivation, and compounding.

There are around 9 POS according to different schools:

- Nouns (NN, NP), pronouns (PN, PRP), adjectives (JJ): number, gender, case

- Adjective (JJ): comparative, superlative, short form

- Verbs (VB): subject number, subject person, tense, aspect, modality, participles, voice

- Adverbs (RB), prepositions (IN), conjunctions (, CS), articles (AT)

and particles (RP): nothing

POS ambiguation

Ship (noun or verb?)

- a luxury cruise ship

- Both products are due to ship at the beginning of June

- A new engine was shipped over from the US

- The port is closed to all shipping

Contest (noun or verb?)

- Stone decided to hold a contest to see who could write the best song.

- She plans to contest a seat in Congress next year.

POS taggers

- Corpus- or dictionary-based VS rule-based

- Ngram-based taggers:

- unigram tagging: assign the most frequent tag

- ngram tagging: look at the context of n previous words (requires a lot of training data)

- Trade-off between the accuracy and the coverage: combine different taggers

NLTK POS default tagger

In[1]: from nltk.tag import pos tag

In[2]: print pos tag(['ship'])

Out[1]: [('ship', 'NN')]

In[3]: print pos tag(['shipping'])

Out[2]: [('shipping', 'VBG')]

See [1] for more details on learning taggers.

Exercise 3.1 Genre comparison

Text genre [Santini, Sharoff, 2009]

The concept of genre is hard to agree upon. Many interpretations have been proposed since Aristotles Poetics without reaching any definite conclusions about the inventory or even principles for classifying documents into genres. The lack of an agreed definition of what genre is causes the problem of the loose boundaries between the term \genre" with other neighbouring terms, such as "register", "domain", "topic", and "style".

Exercise 3.1

Input: Two texts of different genre (for example, Wikipedia article and blog post) Output: rank all of POS tags for both texts

How can you describe the difference between two genres?

Key word and phrase extraction

There are many definitions of key word and phrase. Thus there are many methods for their extraction:

- supervised VS unsupervised

- frequency-based VS more complex

- from individual text VS from text collection

- word (unigram) VS bigram VS ngram

- term VS named entity VS collocation

- sequential words VS using window

Supervised methods for key word and phrase extraction

I am a word. Am I a key word? Let us build a classifier.

- Am I in the beginning or in the end of the sentence?

- Am I capitalized?

- How many times do I occur?

- Am I used in Wikipedia as a title of a category or an article?

- Am I a term?

- Am I a NE?

- etc.

But we need a collection of marked up texts!

Unsupervised methods for key word and phrase extraction from a single text

- POS patterns

- Association measures: PMI, T-Score, LLR

- Graph methods: TextRank [Mihalcea, Tarau, 2004]

- Syntactic patterns

Exercise 3.2

Input: sif1.txt (or your own text)

Key word: top n1 NN

Key phrase: top n2 phrases, that satisfy the following patterns: JJ + NN, NN + NN, NN + IN + NN

Output: list of key words and phrases

Hint: use nltk.ngrams to get ngrams.

Bigram association measures

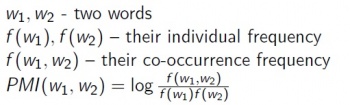

Pointwise Mutual Information [Manning, Shuetze, 1999]

PMI measures the reduction of uncertainty about the occurrence of one word when we are told about the occurrence of the other one.

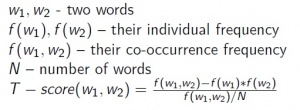

T-Score [Manning, Shuetze, 1999]

T-Score is a statistical t-test applied to finding collocations. The t-test looks at the difference between the observed and expected means, scaled by the variance of the data. The T-score is most useful as a method for ranking collocations. The level of significance itself is less useful.

But Chi-squared has many other interesting applications.

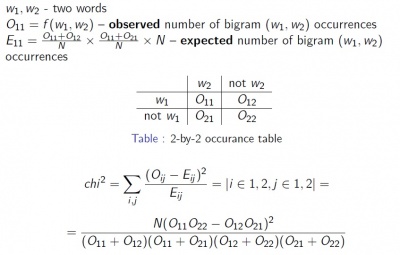

Chi-squared [Manning, Shuetze, 1999]

In general, for the problem of finding collocation, the difference between the T-score and the Chi-squared does not seem to be large.

Bigram association measures in NLTK

NLTK BigramCollocationFinder

In[1]: from nltk.collocations import *

In[2]: bigram measures = nltk.collocations.BigramAssocMeasures()

In[3]: finder = BigramCollocationFinder.from words(tokens)

In[4]: finder.apply freq filter(3)

In[5]: for i in finder.nbest(bigram measures.pmi, 20):

...

Bigram measures:

-

bigram measures.pmi -

bigram measures.student_t -

bigram measures.chi_sq -

igram measures.likelihood_ratio

See [2] for more more bigram association measures.

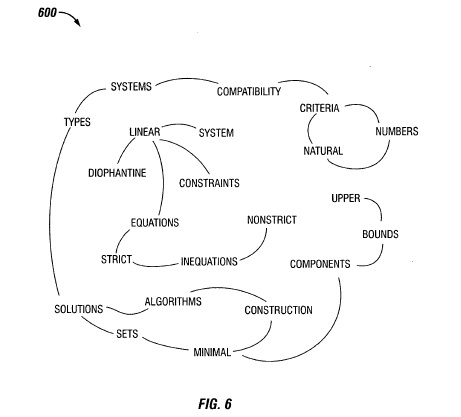

TextRank: using graph centrality measures for key word and phrase extraction [Mihalcea, Tarau, 2004]

- Add words as vertices in the graph.

- Identify relations that connect words:

- consequent words;

- words inside (left or right) the window (2-5 words); and use these relations to draw edges between vertices in the graph. Edges can be directed or undirected, weighted or unweighted.

- Iterate the graph-based ranking algorithm until convergence (for example, PageRank).

- Sort vertices based on their final score. Use the values attached to each vertex for ranking/selection decisions.

- If two adjacent words are selected as potential keywords by TextRank, collapse them into one single key phrase.

See original paper: [3]

Compatibility of systems of linear constraints over the set of natural numbers. Criteria of compatibility of a system of linear Diophantine equations, strict inequations, and nonstrict inequations are considered. Upper bounds for components of a minimal set of solutions and algorithms of construction of minimal generating sets of solutions for all types of systems are given. These criteria and the corresponding algorithms for constructing a minimal supporting set of solutions can be used in solving all the considered types systems and systems of mixed types.

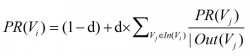

G = (V, E) — a graph, V — vertices, E — edges

In(Vi) — the set of vertices that point to it

Out(Vi) — the set of vertices that Vi points to

Graph centrality measures:

PageRank [Brin, Page, 1998]

HITS [Kleinberg, 1999]

Key words and phrases, assigned by TextRank using PageRank centrality measure: linear constraints; linear diophantine equations; natural numbers; nonstrict inequations; strict inequations; upper bounds

Exercise 3.3

Input: sif.txt (or your own text) Output: key words and phrases computed by PageRank (using PR(Vi),HITSA(Vi) and HITSH(Vi) as centrality measures)

Hint: use NetworkX for PageRank and HITS [4]

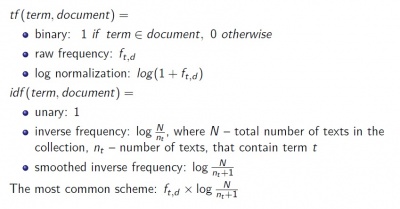

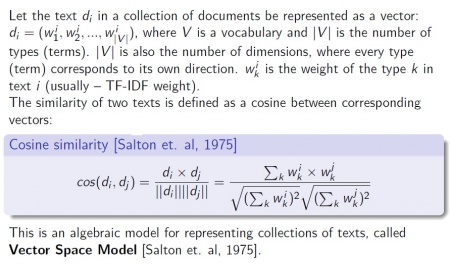

Unsupervised methods for key word and phrase selection from a text in a collection

The problem: given a collection of texts find those words and phrases (terms) that occur in this text significant frequently than in other texts.

Term frequency [Luhn, 1957]

The weight of a term that occurs in a document is simply proportional to the term frequency.

Inverse document frequency [Spaerck Jones, 1972]

The specificity of a term can be quantified as an inverse function of the number of documents in which it occurs.

tfidf (term, text, collection) = tf (term, document) × idf (term, collection)

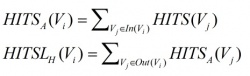

Variants of TF and IDF weights

TF-IDF in NLTK

NLTK TextCollection class

In[1]: from nltk.text import TextCollection

In[2]: collection = [WhitespaceTokenizer().tokenize(text) for text in collection]

In[3]: corpus = TextCollection(collection)

In[4]: for i in collection[0]: print i, corpus.tf idf(i,collection[0])

Exercise 3.4

Input: sif2.txt (or your own collection of texts)

Output: list of key words and phrases according to TF-IDF for one text

Hint: use sorted(mylist,key=lambda l:l[1], reverse=True) to sort mylist in descending order based on the second parameter

TF-IDF alternatives

Exercise 3.5

Write MI and Chi-squared as an alternative for TF-IDF for measuring significance of a term in a text in a collection. Check your ideas in Sebastiani[5], 2001